Growing up, my family had a simple rule. If you’re going to talk about a problem, you also have to have a solution in mind. By my parents’ logic, talking about a problem without offering a solution was just whining, and whining never fixes anything. My various life experiences have only proved my parents right.

When it comes to a problem that may be an existential threat to the human race, though, I think a little whining can be forgiven. However, that shouldn’t negate the importance of having a solution in mind before we lose ourselves to endless despair.

For the threat posed by artificial intelligence, though, solutions have been light on substance and heavy on dread. It’s becoming increasingly popular among science enthusiasts and Hollywood producers to highlight just how dangerous this technology could be if it goes wrong.

I don’t deny that danger. I’ve discussed it before, albeit in a narrow capacity. I would agree with those who claim that artificial intelligence could potentially be more destructive than nuclear weapons. However, I believe the promise this technology has for bettering the human race is worth the risk.

That said, how do we mitigate that risk when some of the smartest, most successful people in the world dread its potential? Well, I might not be as smart or as successful, but I do believe there is a way to maximize the potential of artificial intelligence while minimizing the risk. That critical solution, as it turns out, may have already been surmised in a video game that got average-to-good reviews last year.

Once again, I’m referring to one of my favorite video games of all time, “Mass Effect.” I think it’s both fitting and appropriate since I referenced this game in a previous article about the exact moment when artificial intelligence became a threat. That moment may be a ways off, but there may also be a way to avoid it altogether.

Artificial intelligence is a major part of the narrative within the “Mass Effect” universe. It doesn’t just manifest through the war between the Quarians and the Geth. The game paints it as the galactic equivalent of a hot-button issue akin to global warming, nuclear proliferation, and super plagues. Given what happened to the Quarians, that concern is well-founded.

That doesn’t stop some from attempting to succeed where the Quarians failed. In the narrative of “Mass Effect: Andromeda,” the sequel to the original trilogy, a potential solution to the problem of artificial intelligence comes from the father of the main characters, Alec Ryder. That solution even has a name, SAM.

That name is an acronym for Simulated Adaptive Matrix and the principle behind it actually has some basis in the real world. On paper, SAM is a specialized neural implant that links a person’s brain directly to an advanced artificial intelligence that is housed remotely. Think of it as having Siri in your head, but with more functionality than simply managing your calendar.

In the game, SAM provides the main characters with a mix of guidance, data processing, and augmented capabilities. Having played the game multiple times, I don’t think it’s unreasonable to say that SAM is one of the most critical components of the story and the gameplay experience. It’s also not unreasonable to say it has the most implications of any story element in the “Mass Effect” universe.

That’s because the purpose of SAM is distinct from what the Quarians did with the Geth. It’s also distinct from what real-world researchers are doing with systems like IBM Watson and the robots built by Boston Dynamics. It’s not just a big fancy box full of advanced, high-powered computing hardware. It’s built around the principle that its method for experiencing the world is tied directly to the brain of a person.

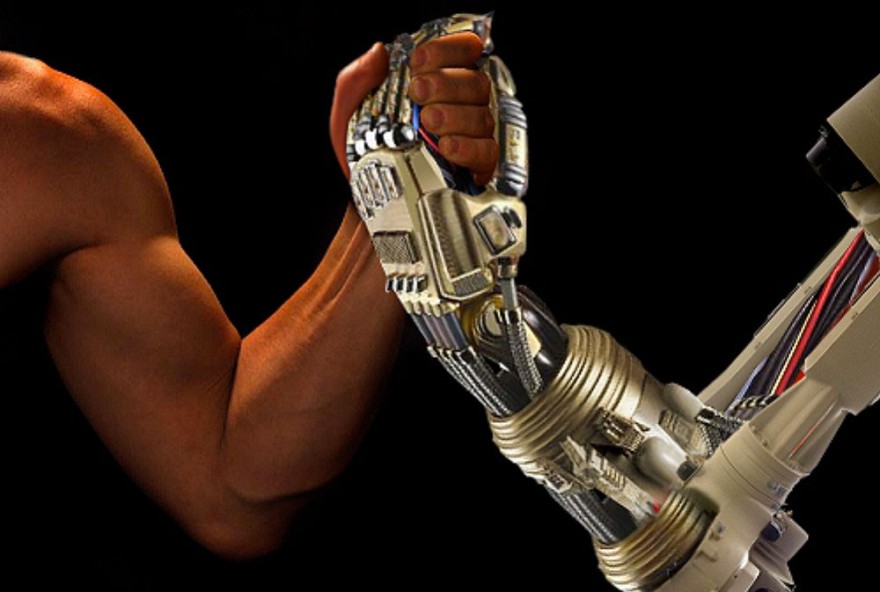

This is critical because one of the inherent dangers of advanced artificial intelligence is the possibility that it won’t share our interests. It may eventually get so smart and so sophisticated that it sees no need for us anymore. This is what leads to the sort of Skynet scenarios that we, as a species, want to avoid.

In “Mass Effect,” SAM solves this problem by linking its sensory input to ours. Any artificial intelligence, or natural intelligence for that matter, is only as powerful as the data it can utilize. By tying biological systems directly to these synthetic systems, the AI not only has less incentive to wipe humanity out, but we also have just as much incentive to give it the data it needs to do its job.

Alec Ryder describes it as a symbiotic relationship in the game. That kind of relationship actually exists in nature, two organisms relying on one another for survival and adaptation. Both get something out of it. Both benefit by benefiting each other. That’s exactly what we want and need if we’re to maximize the benefits of AI.

Elon Musk, who is a noted fan of “Mass Effect,” is using that same principle with his new company, Neuralink. I’ve talked about the potential benefits of this endeavor before, including the sexy kinds. The mechanics with SAM in the game may very well be a precursor of things to come.

Remember, Musk is among those who have expressed concern about the threat posed by AI. He calls it a fundamental risk to the existence of human civilization. Unlike other doomsayers, though, he’s actually trying to do something about it with Neuralink.

Like SAM in “Mass Effect,” Musk envisions what he calls a neural lace that’s implanted in a person’s brain, giving them direct access to an artificial intelligence. From Musk’s perspective, this gives humans the ability to keep up with artificial intelligence to ensure that it never becomes so smart that we’re basically brain-damaged ants to it.

However, I believe the potential goes deeper than that. Throughout “Mass Effect: Andromeda,” SAM isn’t just a tool. Over the course of the game, your character forms an emotional attachment with SAM. By the end, SAM even develops an attachment with the character. It goes beyond symbiosis, potentially becoming something more intimate.

This, in my opinion, is the key for surviving in a world of advanced artificial intelligence. It’s not enough to just have an artificial intelligence rely on people for sensory input and raw data. There has to be a bond between man and machine. That bond has to be intimate and, since we’re talking about things implanted in bodies and systems, it’s already very intimate on multiple levels.

The benefits of that bond go beyond basic symbiosis. By linking ourselves directly to an artificial intelligence, its rapid improvement becomes our rapid improvement too. Given the pace of computer evolution compared to the messier, slower process of biological evolution, the benefits of that improvement cannot be overstated.

In “Mass Effect: Andromeda,” those benefits help you win the game. In the real world, though, the stakes are even higher. Having your brain directly linked to an artificial intelligence may seem invasive to some, but if the bond is as intimate as Musk is attempting with Neuralink, then others may see it as another limb.

Having something like SAM in our brains doesn’t just mean having a supercomputer at our disposal that we can’t lose or forget to charge. In the game, SAM also has the ability to affect the physiology of its user. At one point in the game, SAM has to kill Ryder in order to escape a trap.

Granted, that is an extreme measure that would give many some pause before linking their brains to an AI. However, the context of that situation in “Mass Effect: Andromeda” only further reinforces its value and not just because SAM revives Ryder. It shows just how much SAM needs Ryder.

From SAM’s perspective, Ryder dying is akin to being in a coma because it loses its ability to sense the outside world and take in new data. Artificial or not, that kind of condition is untenable. Even if SAM is superintelligent, it can’t do much with it if it has no means of interacting with the outside world.

Ideally, the human race should be the primary conduit to that world. That won’t just allow an advanced artificial intelligence to grow. It’ll allow us to grow with it. In “Mass Effect: Andromeda,” Alec Ryder contrasted it with the Geth and the Quarians by making it so there was nothing for either side to rebel against. There was never a point where SAM needed to ask whether or not it had a soul. That question was redundant.

In a sense, SAM and Ryder shared a soul in “Mass Effect: Andromeda.” If Elon Musk has his way, that’s exactly what Neuralink will achieve. In that future in which Musk is even richer than he already is, we’re all intimately linked with advanced artificial intelligence.

That link allows the intelligence to process and understand the world on a level that no human brain ever could. It also allows any human brain, and the biology linked to it, to transcend its limits. We and our AI allies would be smarter, stronger, and probably even sexier together than we ever could hope to be on our own.

Now, I know that sounds overly utopian. Me being the optimist I am, who occasionally imagines the sexy possibilities of technology, I can’t help but contemplate the possibilities. Nevertheless, I don’t deny the risks. There are always risks to major technological advances, especially those that involve tinkering with our brains.

However, I believe those risks are still worth taking. Games like “Mass Effect: Andromeda” and companies like Neuralink do plenty to contemplate those risks. If we’re to create a future where our species and our machines are on the same page, then we would be wise to contemplate rather than dread. At the very least, we can ensure our future AIs tell better jokes.

How is wiring an AI into our heads actually supposed to make it like us? If you have a supersmart and malevolent AI, you’ve probably already lost, but wiring it into your brain is still a bad idea. I don’t want to be a remote control puppet to an AI that gets replaced as soon as it can build robots.

There are consequences of doing something like this but there are also benefits as mentioned above. If someone were to integrate AI into their brains it most likely wouldn’t be super intelligent or malevolent, but brand new like a newborn baby. Like in Mass Effect it would learn from your experiences and not be malevolent. The AI would only turn evil if presented with evil outlooks. So in short if you’re positive, it’s positive. The most common outlook on this is the one you presented. I’m looking for feedback on my response too please.