Picture the following scenario, which may or may not become a common occurrence in the near future. It’s not a thought experiment. It’s not a prediction either. It’s just one possible version of what our future might hold.

It’s late at night and you decide to check out some porn. You struggle to decide which one you want to watch. You’re in the mood for something new so you search a little more. You find some elaborate scene where Amy Shumer is a transvestite and she’s doing it with Justin Bieber.

Eventually, you settle on the hottest new scene that just came out the other day. It has Kevin Hart, Steph Curry, and Michael B. Jordan all taking turns with Scarlett Johansson in a sauna in Paris. The scene plays out. You love ever minute of it and decide to save it.

I admit that scenario was pretty lurid. I apologize if it got a little too detailed for some people, but I needed to emphasize just how far this may go. It’s an issue that has made the news lately, but one that may end up becoming a far greater concern as technological trends in computing power and artificial intelligence mature.

The specific news I’m referring to involves something called “deep fakes.” They don’t just have huge implications for the porn industry. They may also have major implications for media, national security, and our very understanding of reality.

In essence, a deep fake is a more elaborate version of Photoshopping someone’s face into a scene. That has been around for quite some time, though. People pasting the faces of celebrities and friends into pictures from porn is fairly common. It’s also fairly easy to identify as fake. The technology is good, but not indistinguishable from reality.

That may be changing, though, and it may change in a way that goes beyond making lurid photos. Computer technology and graphics technology are getting to a point where the realism is so good that it’s difficult to discern what’s fake. Given the rapid pace of computer technology, it’s only going to get more realistic as time goes on.

That’s where deep fakes clash with the porn industry. It’s probably not the biggest implication of this technology, but it might be the most relevant in our celebrity-loving culture. In a sense, it already has become an issue and it will likely become a bigger issue in the coming years.

It started when PornHub, the most popular porn site on the planet, took a major stand by removing deep fakes from its website. Specifically, there was a video in which Gal Gadot, also known as Wonder Woman and a person I’ve praised many times on this blog, was digitally added to a porn scene.

Now, it’s not quite as impressive as it sounds. This wasn’t a fully digital rendering of an entire scene. It was just a computer superimposing Gal Gadot’s face onto that of a porn actress for a scene. In terms of pushing the limits of computer technology, this didn’t go that far. It was just a slightly more advanced kind of Photoshopping.

Anyone who has seen pictures of Gal Gadot or just watched “Wonder Woman” a hundred times, like me, could easily tell that the woman in that scene isn’t Ms. Gadot. Her face literally does not match her physique. For those not that familiar with her, though, it might be hard to tell.

That’s exactly why PornHub removed it. Their position is that such deep fakes are done without the explicit permission of the person being depicted and constitute an act of revenge porn, which has become a major legal issue in recent years. These are PornHub’s exact words.

Non-consensual content directly violates our TOS [terms of service] and consists of content such as revenge porn, deepfakes or anything published without a person’s consent or permission.

While I applaud PornHub for making an effort to fight content that puts beloved celebrities or private citizens in compromising positions, I fear that those efforts are going to be insufficient. PornHub might be a fairly responsible adult entertainment company, but who can say the same about the billions of other sites on the internet?

If that weren’t challenging enough, the emergence of artificial intelligence will further complicate the issue of deep fakes. That’s because before AI gets smart enough to ask us whether or not it has a soul, it’ll be geared toward performing certain tasks at a level beyond any programmer. Some call this weak AI, but it still has the power to disrupt more than our porn collection.

In a Motherboard article, an artificial intelligence researcher made clear that it’s no longer exceedingly hard for someone who is reckless, tech-savvy, and horny enough to create the kind of deep fakes that put celebrities in compromising positions. In fact, our tendency to take a million selfies a day may make that process even easier. Here’s what Motherboard said on just how much we’re facilitating deep fakes.

The ease with which someone could do this is frightening. Aside from the technical challenge, all someone would need is enough images of your face, and many of us are already creating sprawling databases of our own faces: People around the world uploaded 24 billion selfies to Google Photos in 2015-2016. It isn’t difficult to imagine an amateur programmer running their own algorithm to create a sex tape of someone they want to harass.

In a sense, we’ve already provided the raw materials for these deep fakes. Some celebrities have provided far more than others and that may make them easy targets. However, even celebrities that emphasize privacy may not be safe as AI technology improves.

In the past, the challenge for any programmer was ensuring every frame of a deep fake was smooth and believable. Doing that by hand, frame by frame, is grossly inefficient, which put a natural limit on deep fakes. Now, artificial intelligence has advanced to the point where it can make its own art. If it can do that, then it can certainly help render images of photogenic celebrities in any number of ways.

If that weren’t ominous enough, there’s also similar technology emerging that allows near-perfect mimicry of someone’s voice. Just last year, a company called Lyrebird created a program that mimicked former President Obama’s voice. It was somewhat choppy and most people would recognize it as fake. However, with future improvements, it may be next to impossible to tell real from fake.

That means in future deep fakes, the people involved, be they celebrities or total strangers, will look and sound exactly like the real thing. What you see will look indistinguishable from a professionally shot scene. From your brain’s perspective, it’s completely real.

One of these is real and the other is fake. Seriously.

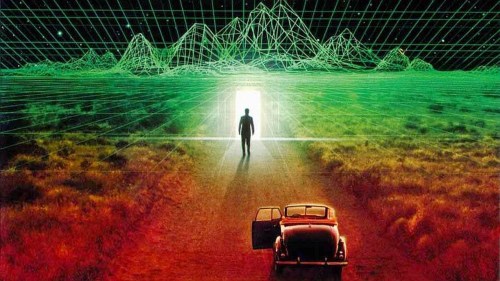

That blurring of virtual reality and actual reality has huge implications that go beyond the porn industry. Last year, I pointed out how “Rogue One: A Star Wars Story” was able to bring a long-dead actor back to life in a scene. I highlighted that as a technology that could change the way Hollywood makes movies and deals with actors. Deep fakes, however, are the dark side of that technology.

I believe celebrities and private citizens who have a lot of videos or photos of themselves online are right to worry. Between graphics technology, targeted artificial intelligence, and voice mimicry, they’ll basically lose control of their own reality.

That’s a pretty scary future. Deep fakes could make it so there’s video and photographic evidence of people saying and doing the most lurid, decadent, offensive things imaginable. You could have beloved celebrities go on racist rants. You could have celebrities everyone hates die gruesome deaths in scenes that make “Game of Thrones” look like an old Disney movie.

The future of deep fakes makes our very understanding of reality murky. We already live in a world where people eagerly accept as truth what is known to be false, especially with celebrities. Deep fakes could make an already frustrating situation much worse, especially as the technology improves.

For now, deep fakes are fairly easy to sniff out and the fact that companies like PornHub are willing to combat them is a positive sign. However, I believe far greater challenges lie ahead. I also believe there’s a way to overcome those challenges, but I have a feeling we’ll have a lot to adjust to in a future where videos of Tom Hanks making out with Courtney Love might be far too common.
